Registering an Environment

In the previous tutorial, we learned how to create a custom cartpole environment. We manually created an instance of the environment by importing the environment class and its configuration class.

Environment creation in the previous tutorial

# create environment configuration
env_cfg = CartpoleEnvCfg()
env_cfg.scene.num_envs = args_cli.num_envs
env_cfg.sim.device = args_cli.device
# setup RL environment
env = ManagerBasedRLEnv(cfg=env_cfg)

While straightforward, this approach does not scale to a large suite of environments, because every script must import the specific environment class and configuration class it needs.

In this tutorial, we will show how to use the gymnasium.register() method to register environments with the gymnasium registry. This allows us to create the environment through the gymnasium.make() function.

Environment creation in this tutorial

# parse configuration via Hydra (supports preset selection, e.g. env.sim.physics=newton_mjwarp)
env_cfg, _ = resolve_task_config(args_cli.task, "")

# create environment
env = gym.make(args_cli.task, cfg=env_cfg)

The Code

The tutorial corresponds to the random_agent.py script in the scripts/environments directory.

Code for random_agent.py

# Copyright (c) 2022-2026, The Isaac Lab Project Developers (https://github.com/isaac-sim/IsaacLab/blob/main/CONTRIBUTORS.md).
# All rights reserved.
#
# SPDX-License-Identifier: BSD-3-Clause

"""Script to an environment with random action agent."""

import argparse
import contextlib
import sys

import gymnasium as gym
import torch

import isaaclab_tasks  # noqa: F401

with contextlib.suppress(ImportError):
    import isaaclab_tasks_experimental  # noqa: F401
from isaaclab.app import add_launcher_args, launch_simulation

from isaaclab_tasks.utils import (
    resolve_task_config,
    setup_preset_cli,
)

# add argparse arguments
parser = argparse.ArgumentParser(description="Random agent for Isaac Lab environments.")
parser.add_argument(
    "--disable_fabric", action="store_true", default=False, help="Disable fabric and use USD I/O operations."
)
parser.add_argument("--num_envs", type=int, default=None, help="Number of environments to simulate.")
parser.add_argument("--task", type=str, default=None, help="Name of the task.")
# append AppLauncher cli args
add_launcher_args(parser)
args_cli, hydra_args = setup_preset_cli(parser)
sys.argv = [sys.argv[0]] + hydra_args

# PLACEHOLDER: Extension template (do not remove this comment)


def main():
    """Random actions agent with Isaac Lab environment."""

    torch.manual_seed(42)

    # parse configuration via Hydra (supports preset selection, e.g. env.sim.physics=newton_mjwarp)
    env_cfg, _ = resolve_task_config(args_cli.task, "")

    with launch_simulation(env_cfg, args_cli):
        # override with CLI arguments
        env_cfg.scene.num_envs = args_cli.num_envs if args_cli.num_envs is not None else env_cfg.scene.num_envs
        env_cfg.sim.device = args_cli.device if args_cli.device is not None else env_cfg.sim.device
        if args_cli.disable_fabric:
            env_cfg.sim.use_fabric = False

        # create environment
        env = gym.make(args_cli.task, cfg=env_cfg)

        # print info (this is vectorized environment)
        print(f"[INFO]: Gym observation space: {env.observation_space}")
        print(f"[INFO]: Gym action space: {env.action_space}")
        # reset environment
        env.reset()
        # simulate environment
        sim = env.unwrapped.sim
        while True:
            if sim.visualizers:
                # visualizer mode: run until the visualizer window is closed
                if not any(v.is_running() and not v.is_closed for v in sim.visualizers):
                    break
            # run everything in inference mode
            with torch.inference_mode():
                # sample actions from -1 to 1
                actions = 2 * torch.rand(env.action_space.shape, device=env.unwrapped.device) - 1
                # apply actions
                env.step(actions)

        # close the simulator
        env.close()


if __name__ == "__main__":
    # run the main function
    main()

The Code Explained

The envs.ManagerBasedRLEnv class inherits from the gymnasium.Env class to follow a standard interface. However, unlike traditional Gym environments, envs.ManagerBasedRLEnv implements a vectorized environment. This means that multiple environment instances run simultaneously in the same process, and all the data is returned in a batched fashion.
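To make the idea of batched data concrete, here is a minimal sketch in plain Python. ToyVectorEnv is a hypothetical stand-in written for this illustration, not an Isaac Lab class, and it uses lists where a real environment would return tensors. The point is only that a single step() call takes one action per instance and returns one observation and one reward per instance.

```python
import random


class ToyVectorEnv:
    """Hypothetical stand-in for a vectorized environment (not an Isaac Lab class)."""

    def __init__(self, num_envs):
        self.num_envs = num_envs
        # one scalar state per environment instance
        self.state = [0.0] * num_envs

    def reset(self):
        self.state = [0.0] * self.num_envs
        return list(self.state)

    def step(self, actions):
        if len(actions) != self.num_envs:
            raise ValueError("expected one action per environment instance")
        # advance every instance in a single call
        self.state = [s + a for s, a in zip(self.state, actions)]
        observations = list(self.state)
        rewards = [-abs(s) for s in self.state]
        return observations, rewards


env = ToyVectorEnv(num_envs=4)
obs = env.reset()
# sample one action in [-1, 1) per instance, mirroring the random agent
actions = [2 * random.random() - 1 for _ in range(env.num_envs)]
obs, rewards = env.step(actions)
print(len(obs), len(rewards))
```

Every returned container has a leading dimension equal to the number of instances, which is why the script samples actions with the full shape of env.action_space.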

Similarly, the envs.DirectRLEnv class also inherits from the gymnasium.Env class for the direct workflow. Although envs.DirectMARLEnv does not inherit from Gymnasium, it can be registered and created in the same way.

Using the gym registry

To register an environment, we use the gymnasium.register() method. This method takes the environment name, the entry point to the environment class, and the entry point to the environment configuration class.

Note

The gymnasium registry is a global registry. Hence, it is important to ensure that the environment names are unique. Otherwise, registrations collide: recent Gymnasium releases warn and override the earlier entry, while older Gym releases raised an error.
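The following is a toy, standard-library sketch of what a global name-based registry does. It is not Gymnasium's implementation: register, make, ToyEnv, and the name Toy-Cartpole are hypothetical names for this illustration, and this toy version rejects duplicate names outright. It shows how keyword arguments stored at registration time are combined with those passed at creation time, which is how a cfg argument given to make() can reach the environment constructor.

```python
from typing import Any, Callable, Dict, Optional, Tuple

# Hypothetical module-level registry shared by every caller in the process.
_REGISTRY: Dict[str, Tuple[Callable[..., Any], Dict[str, Any]]] = {}


def register(name: str, entry_point: Callable[..., Any], kwargs: Optional[Dict[str, Any]] = None) -> None:
    """Store a factory and its default keyword arguments under a unique name."""
    if name in _REGISTRY:
        raise ValueError(f"environment name already registered: {name}")
    _REGISTRY[name] = (entry_point, dict(kwargs or {}))


def make(name: str, **overrides: Any) -> Any:
    """Build an instance; call-site keyword arguments take precedence over defaults."""
    entry_point, defaults = _REGISTRY[name]
    return entry_point(**{**defaults, **overrides})


class ToyEnv:
    """Hypothetical environment that accepts a config plus extra registered kwargs."""

    def __init__(self, cfg: Optional[dict] = None, **kwargs: Any):
        self.cfg = cfg
        self.extra = kwargs


register("Toy-Cartpole", ToyEnv, kwargs={"env_cfg_entry_point": "toy_pkg.cfg:ToyCfg"})
env = make("Toy-Cartpole", cfg={"num_envs": 32})
print(env.cfg, env.extra)
```

Because the registry is a single shared mapping, two packages that pick the same name would interfere with each other, which is the reason for the naming convention described in this section.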

Manager-Based Environments

For manager-based environments, the following shows the registration call for the cartpole environment in the isaaclab_tasks.core.cartpole sub-package:

import gymnasium as gym

from . import agents

gym.register(
    id="Isaac-Cartpole",
    entry_point="isaaclab.envs:ManagerBasedRLEnv",
    disable_env_checker=True,
    kwargs={
        "env_cfg_entry_point": f"{__name__}.cartpole_manager_env_cfg:CartpoleEnvCfg",
        "rl_games_cfg_entry_point": f"{agents.__name__}:rl_games_manager_ppo_cfg.yaml",
        "rsl_rl_cfg_entry_point": f"{agents.__name__}.rsl_rl_ppo_cfg:CartpolePPORunnerCfg",
        "rsl_rl_with_symmetry_cfg_entry_point": (
            f"{agents.__name__}.rsl_rl_ppo_cfg:CartpolePPORunnerWithSymmetryCfg"
        ),
        "skrl_cfg_entry_point": f"{agents.__name__}:skrl_manager_ppo_cfg.yaml",
        "sb3_cfg_entry_point": f"{agents.__name__}:sb3_ppo_cfg.yaml",
    },
)

The id argument is the name of the environment. As a convention, we name all the environments with the prefix Isaac- to make it easier to search for them in the registry. The prefix is typically followed by the name of the task, and then the name of the robot. For instance, for legged locomotion with ANYmal C on flat terrain, the environment is called Isaac-Velocity-Flat-Anymal-C-v0. The version number v<N> is typically used to specify different variations of the same environment. Otherwise, the names of the environments can become too long and difficult to read.
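As a small illustration of why the naming convention helps, the sketch below filters a list of names by the Isaac- prefix and splits off the optional v<N> suffix. The helper split_env_id and the name Other-Env-v1 are hypothetical and exist only for this example; the list stands in for the keys of a registry.

```python
import re
from typing import Optional, Tuple

# base name, then an optional "-v<N>" version suffix at the very end
_VERSION_SUFFIX = re.compile(r"^(?P<base>.+)-v(?P<version>\d+)$")


def split_env_id(env_id: str) -> Tuple[str, Optional[int]]:
    """Split an environment name into (base name, version or None)."""
    match = _VERSION_SUFFIX.match(env_id)
    if match is None:
        return env_id, None
    return match.group("base"), int(match.group("version"))


registered_ids = ["Isaac-Cartpole", "Isaac-Velocity-Flat-Anymal-C-v0", "Other-Env-v1"]

# the shared prefix makes it easy to find the Isaac Lab environments
isaac_ids = [env_id for env_id in registered_ids if env_id.startswith("Isaac-")]

for env_id in isaac_ids:
    print(split_env_id(env_id))
```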

The entry_point argument is the entry point to the environment class. The entry point is a string of the form <module>:<class>. In the case of the cartpole environment, the entry point is isaaclab.envs:ManagerBasedRLEnv. The entry point is used to import the environment class when creating the environment instance.
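A string of the form <module>:<class> can be turned into the class object with the standard library alone. The sketch below shows the general technique; resolve_entry_point is a hypothetical helper for this illustration, not the function Gymnasium uses internally, and it is demonstrated on a standard-library class so that it runs without Isaac Lab installed.

```python
import importlib
from typing import Any


def resolve_entry_point(entry_point: str) -> Any:
    """Import '<module>' and return the attribute named '<class>'."""
    module_name, sep, attr_name = entry_point.partition(":")
    if not sep or not module_name or not attr_name:
        raise ValueError(f"expected '<module>:<class>', got {entry_point!r}")
    # the import happens here, at resolution time, not at registration time
    module = importlib.import_module(module_name)
    return getattr(module, attr_name)


cls = resolve_entry_point("collections:OrderedDict")
print(cls.__name__)
```

Storing a string instead of the class itself means that registering an environment does not require importing its module; the import is deferred until the environment is created.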

The env_cfg_entry_point entry in kwargs specifies the default configuration for the environment. The default configuration is loaded using the isaaclab_tasks.utils.parse_env_cfg() function (the script in this tutorial obtains it through resolve_task_config()). It is then passed to the gymnasium.make() function to create the environment instance. The configuration entry point can be either a YAML file or a Python configuration class.
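The two forms of configuration entry point can be told apart from the string itself: in the registration call, YAML entries look like <module>:<file>.yaml and class entries look like <module>:<class>. The sketch below classifies such strings. It is an assumption-laden illustration, not the loader Isaac Lab uses: describe_cfg_entry_point and the package name toy_pkg are hypothetical.

```python
from typing import Tuple


def describe_cfg_entry_point(entry_point: str) -> Tuple[str, str, str]:
    """Return (kind, module, target) for a '<module>:<target>' string."""
    module_name, sep, target = entry_point.partition(":")
    if not sep or not module_name or not target:
        raise ValueError(f"expected '<module>:<target>', got {entry_point!r}")
    # a YAML target names a file in the module's directory; otherwise it names a class
    kind = "yaml" if target.endswith((".yaml", ".yml")) else "class"
    return kind, module_name, target


print(describe_cfg_entry_point("toy_pkg.agents:sb3_ppo_cfg.yaml"))
print(describe_cfg_entry_point("toy_pkg.cartpole_env_cfg:CartpoleEnvCfg"))
```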

Direct Environments

For direct environments, the registration follows a similar pattern. Instead of registering the ManagerBasedRLEnv class as the environment's entry point, we register the implementation class of the environment. Additionally, we add the suffix -Direct to the environment name to differentiate it from the manager-based environments.

As an example, the following shows the registration call for the cartpole environment in the isaaclab_tasks.core.cartpole sub-package:

import gymnasium as gym

from . import agents

gym.register(
    id="Isaac-Cartpole-Direct",
    entry_point=f"{__name__}.cartpole_direct_env:CartpoleEnv",
    disable_env_checker=True,
    kwargs={
        "env_cfg_entry_point": f"{__name__}.cartpole_direct_env_cfg:CartpoleEnvCfg",
        "rl_games_cfg_entry_point": f"{agents.__name__}:rl_games_direct_ppo_cfg.yaml",
        "rsl_rl_cfg_entry_point": f"{agents.__name__}.rsl_rl_ppo_cfg:CartpoleDirectPPORunnerCfg",
        "skrl_cfg_entry_point": f"{agents.__name__}:skrl_direct_ppo_cfg.yaml",
        "sb3_cfg_entry_point": f"{agents.__name__}:sb3_ppo_cfg.yaml",
    },
)

Creating the environment

To populate the gym registry with all the environments provided by the isaaclab_tasks extension, we must import the module at the start of the script. This executes the __init__.py file, which iterates over all the sub-packages and registers their respective environments.

import isaaclab_tasks # noqa: F401

In this tutorial, the task name is read from the command line. The task name is used to parse the default configuration as well as to create the environment instance. In addition, other parsed command line arguments, such as the number of environments, the simulation device, and whether to disable Fabric, are used to override the default configuration.

# parse configuration via Hydra (supports preset selection, e.g. env.sim.physics=newton_mjwarp)
env_cfg, _ = resolve_task_config(args_cli.task, "")

with launch_simulation(env_cfg, args_cli):
    # override with CLI arguments
    env_cfg.scene.num_envs = args_cli.num_envs if args_cli.num_envs is not None else env_cfg.scene.num_envs
    env_cfg.sim.device = args_cli.device if args_cli.device is not None else env_cfg.sim.device
    if args_cli.disable_fabric:
        env_cfg.sim.use_fabric = False

    # create environment
    env = gym.make(args_cli.task, cfg=env_cfg)
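The override pattern in this snippet (use the command line value only when one was given, otherwise keep the registered default) can be tried in isolation. The sketch below reproduces it with argparse and dataclasses only. The Toy* classes and their default values are hypothetical placeholders, not the Isaac Lab configuration classes, and the --device flag is defined locally here rather than by the launcher.

```python
import argparse
from dataclasses import dataclass, field


@dataclass
class ToySceneCfg:
    num_envs: int = 4096  # placeholder default, not taken from Isaac Lab


@dataclass
class ToySimCfg:
    device: str = "cuda:0"  # placeholder default
    use_fabric: bool = True


@dataclass
class ToyEnvCfg:
    scene: ToySceneCfg = field(default_factory=ToySceneCfg)
    sim: ToySimCfg = field(default_factory=ToySimCfg)


def build_parser() -> argparse.ArgumentParser:
    parser = argparse.ArgumentParser()
    parser.add_argument("--num_envs", type=int, default=None)
    parser.add_argument("--device", type=str, default=None)
    parser.add_argument("--disable_fabric", action="store_true", default=False)
    return parser


def apply_cli_overrides(cfg: ToyEnvCfg, args: argparse.Namespace) -> ToyEnvCfg:
    # None means "flag not given", so the default from the config is kept
    if args.num_envs is not None:
        cfg.scene.num_envs = args.num_envs
    if args.device is not None:
        cfg.sim.device = args.device
    if args.disable_fabric:
        cfg.sim.use_fabric = False
    return cfg


args = build_parser().parse_args(["--num_envs", "32"])
cfg = apply_cli_overrides(ToyEnvCfg(), args)
print(cfg.scene.num_envs, cfg.sim.device, cfg.sim.use_fabric)
```

Using None as the argparse default is what lets the code distinguish an omitted flag from an explicit value.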

Once the environment is created, the rest of the execution follows the standard pattern of resetting and stepping.

The Code Execution

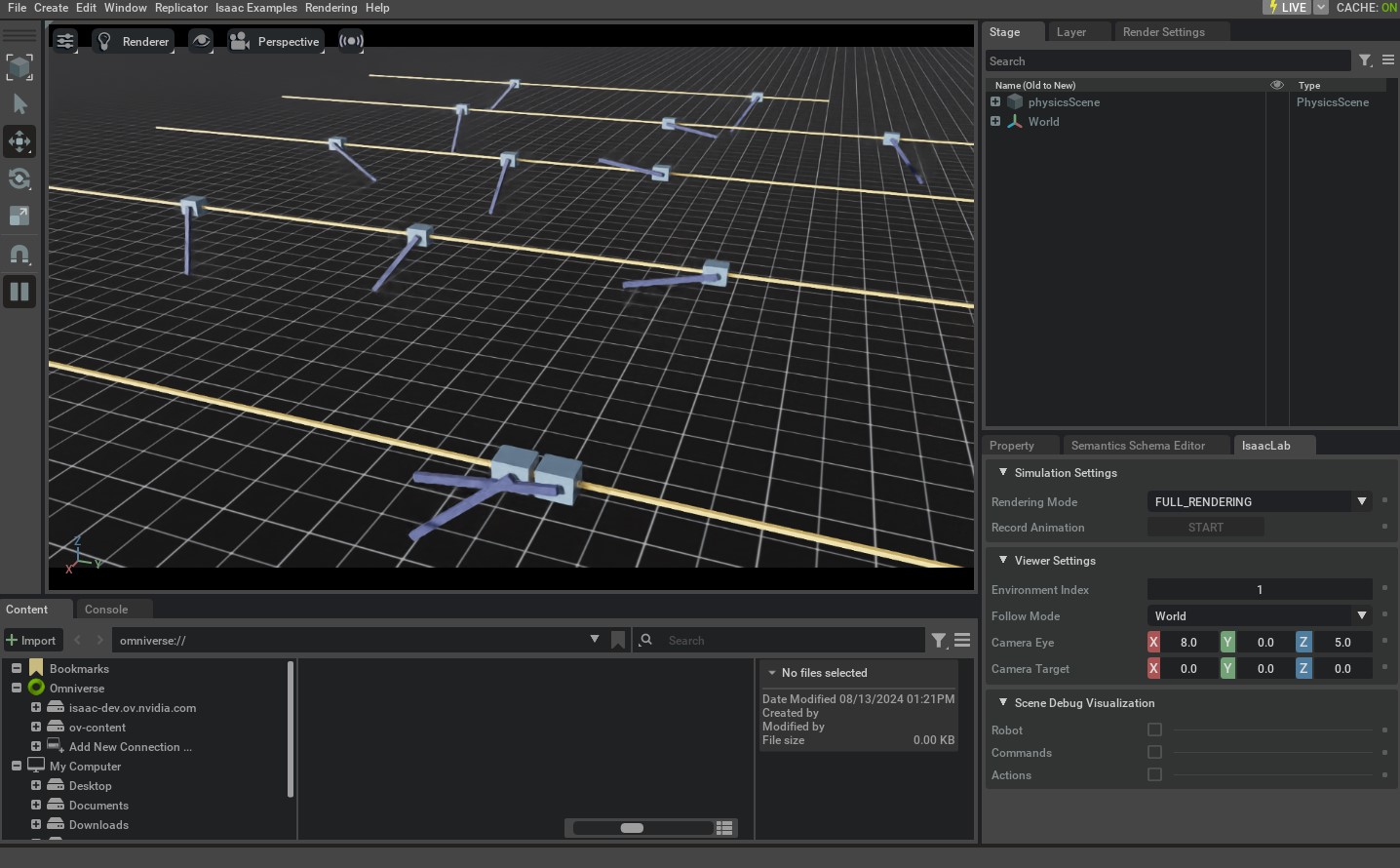

Now that we have gone through the code, let’s run the script and see the result:

./isaaclab.sh -p scripts/environments/random_agent.py --task Isaac-Cartpole --num_envs 32 --viz kit

This should open a stage similar to the one in the Creating a Manager-Based RL Environment tutorial.

To stop the simulation, you can either close the window, or press Ctrl+C in the terminal.

You can also change the simulation device from GPU to CPU by setting the value of the --device flag explicitly:

./isaaclab.sh -p scripts/environments/random_agent.py --task Isaac-Cartpole --num_envs 32 --device cpu --viz kit

With the --device cpu flag, the simulation will run on the CPU. This is useful for debugging the simulation. However, the simulation will run much slower than on the GPU.